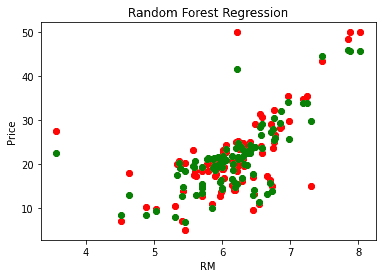

A Random Forest is an ensemble technique capable of performing both regression and classification tasks with the use of multiple decision trees and a technique called Bootstrap and Aggregation, commonly known as bagging. The basic idea is to combine multiple decision trees in determining the final output rather than relying on an individual decision tree.

Random Forest uses multiple decision trees as base learning models. We randomly perform row sampling and feature sampling from the dataset, forming a sample dataset for every model. This sampling step is the Bootstrap part of bagging.

Every decision tree has high variance, but when we combine all of them in parallel, the resulting variance is low: each decision tree is trained on its own sample of the data, so the output depends on many decision trees rather than on one. In the case of a classification problem, the final output is decided by majority vote across the trees. In the case of a regression problem, the final output is the mean of all the trees' outputs. This part is Aggregation.

We need to approach the Random Forest regression technique like any other machine learning technique:

- Design a specific question or data and get the source to determine the required data.
- Make sure the data is in an accessible format; otherwise, convert it to the required format.
- Identify any noticeable anomalies and missing data points that need to be handled to obtain the required data.
- Set the baseline model that you want to achieve.
- Provide insight into the model with test data.
- Compare the performance metrics of the actual test data against the data predicted by the model.
- If the model doesn't satisfy your expectations, try improving it, updating your data, or using another data modeling technique.
- At this stage, interpret the results you have gained and report accordingly.

You will be using a similar technique in the example below.

Below is a step-by-step sample implementation of Random Forest Regression.

Step 3: Select all rows and column 1 from the dataset as x, and all rows and column 2 as y.

Step 4: Fit the Random Forest regressor to the dataset.

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.ensemble import RandomForestRegressor

# x (2-D, a single feature column) and y (1-D target) are the arrays from Step 3.

# Fitting Random Forest Regression to the dataset
regressor = RandomForestRegressor(n_estimators=100, random_state=0)
regressor.fit(x, y)

# Test the output by changing the input value (6.5 is only a placeholder)
Y_prediction = regressor.predict(np.array([6.5]).reshape(1, 1))

# Visualising the Random Forest Regression results
X_grid = np.arange(x.min(), x.max(), 0.01)  # fine grid over x; step 0.01 is an arbitrary choice
# reshape the data into a len(X_grid) x 1 array, since predict() expects 2-D input
X_grid = X_grid.reshape((len(X_grid), 1))
plt.plot(X_grid, regressor.predict(X_grid))
plt.show()
```
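The following is a minimal from-scratch sketch of bagged regression trees that makes the Bootstrap and Aggregation steps concrete. It is not how scikit-learn implements `RandomForestRegressor` internally and only illustrates the idea. Each tree is trained on rows sampled with replacement. Feature sampling is handled by `DecisionTreeRegressor(max_features=...)`, which samples features at every split (as scikit-learn's forests do) rather than once per tree. The final regression output is the mean of the per-tree predictions. The helper names `fit_bagged_trees` and `predict_bagged`, the tree count, and the noisy sine data are hypothetical choices for illustration.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_bagged_trees(X, y, n_trees=25, max_features=1.0, seed=0):
    """Bootstrap step (hypothetical helper): fit each tree on rows sampled with replacement.

    Feature sampling is delegated to DecisionTreeRegressor(max_features=...),
    which considers a random subset of features at every split.
    """
    rng = np.random.default_rng(seed)
    n_rows = X.shape[0]
    trees = []
    for _ in range(n_trees):
        rows = rng.integers(0, n_rows, size=n_rows)  # row sampling with replacement
        tree = DecisionTreeRegressor(
            max_features=max_features,
            random_state=int(rng.integers(0, 2**31 - 1)),
        )
        tree.fit(X[rows], y[rows])
        trees.append(tree)
    return trees


def predict_bagged(trees, X):
    """Aggregation step (hypothetical helper): average the per-tree predictions."""
    per_tree = np.stack([tree.predict(X) for tree in trees])  # shape (n_trees, n_samples)
    return per_tree.mean(axis=0)


# Synthetic 1-D data for illustration only: a noisy sine curve.
rng = np.random.default_rng(42)
X = np.sort(rng.uniform(0, 6, size=80)).reshape(-1, 1)
y = np.sin(X).ravel() + rng.normal(scale=0.2, size=80)

trees = fit_bagged_trees(X, y)
X_new = np.array([[1.5], [4.5]])
print(predict_bagged(trees, X_new))
```

For a classification problem, the aggregation step would instead return the most common label across the trees (majority vote).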
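You can check that a fitted scikit-learn forest aggregates its regression trees by averaging them. Recompute the forest's prediction from the individual trees stored in its `estimators_` attribute and compare the two. The synthetic data and the choice of 50 trees below are assumptions made for this sketch. `np.allclose` is used because the two averages may differ by floating-point rounding.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic data for illustration only.
rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(200, 3))
y = 2.0 * X[:, 0] + np.sin(X[:, 1]) + rng.normal(scale=0.1, size=200)

forest = RandomForestRegressor(n_estimators=50, random_state=0).fit(X, y)

X_query = X[:5]
forest_pred = forest.predict(X_query)

# Aggregation by hand: mean of the individual fitted trees' predictions.
tree_preds = np.stack([tree.predict(X_query) for tree in forest.estimators_])
manual_pred = tree_preds.mean(axis=0)

print(np.allclose(forest_pred, manual_pred))
```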
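The checklist above (set a baseline, evaluate on test data, compare performance metrics) and Steps 3 and 4 can be sketched end to end. The original dataset isn't shown, so this sketch assumes a made-up three-column DataFrame with a synthetic quadratic target. The column names `Position`, `Level` and `Salary` are hypothetical. Using `DummyRegressor` as the baseline and mean absolute error as the metric are choices of this sketch, not of the original example.

```python
import numpy as np
import pandas as pd
from sklearn.dummy import DummyRegressor
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

# Hypothetical dataset: column 0 is a label, column 1 the feature, column 2 the target.
rng = np.random.default_rng(1)
level = rng.uniform(1, 10, size=300)
data = pd.DataFrame({
    "Position": [f"P{i}" for i in range(300)],
    "Level": level,
    "Salary": 1000 * level**2 + rng.normal(scale=2000, size=300),
})

# Step 3: all rows and column 1 as x (kept 2-D via slicing), all rows and column 2 as y.
x = data.iloc[:, 1:2].values
y = data.iloc[:, 2].values

# Hold out test data so the model can be evaluated on unseen rows.
x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.25, random_state=0)

# Baseline model: always predict the mean of the training targets.
baseline = DummyRegressor(strategy="mean").fit(x_train, y_train)

# Step 4: fit the Random Forest regressor.
regressor = RandomForestRegressor(n_estimators=100, random_state=0).fit(x_train, y_train)

# Compare performance metrics of both models on the test data.
baseline_mae = mean_absolute_error(y_test, baseline.predict(x_test))
forest_mae = mean_absolute_error(y_test, regressor.predict(x_test))
print(f"baseline MAE: {baseline_mae:.0f}, random forest MAE: {forest_mae:.0f}")
```

If the forest does not clearly beat the baseline on your own data, that is the point in the checklist where you revisit the model, the data, or the technique.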
